spacetimeid

These days, the quiet times in my life are waiting for large databases to be imported or exported or watching as large files are transferred across the network. I had one of those the other night so I cobbled together another small, bespoke AppEngine tool: spacetimeid

It's a very simple web application that encodes and decodes unique 64-bit numeric identifiers for a combined set of x (longitude), y (latitude) and z (time) coordinates.

Another way to think about that is as a unique ID for any given point at a specific time anywhere on Earth.

For example, the corner of 16th and Mission, in San Francisco, at around midnight the night/morning of March 17, 2010 would be encoded like this:

```
GET http://spacetimeid.appspot.com/encode/-122.419304/37.764832/1268809061

<rsp stat="ok">
  <spacetime y="37.764832" x="-122.419304" z="1268809061" id="4726418083411316312844298485971"/>
</rsp>
```

To decode a unique ID back into space and time coordinates, you'd do this:

```
GET http://spacetimeid.appspot.com/decode/4726418083411316312844298485971

<rsp stat="ok">
  <spacetime y="37.764832" x="-122.419304" z="1268809061" id="4726418083411316312844298485971"/>
</rsp>
```
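The responses are plain XML, so a client only has to build the URL and read the attributes off the `spacetime` element. Below is a minimal Python 3 sketch using only the standard library. The appspot host is the one from the examples and may no longer be live, so the parsing is shown against the sample response from this post. The function names are hypothetical.

```python
import xml.etree.ElementTree as ET

BASE = "http://spacetimeid.appspot.com"


def encode_url(x, y, z):
    """Build an /encode request URL: longitude, latitude, Unix timestamp."""
    return "%s/encode/%s/%s/%s" % (BASE, x, y, z)


def decode_url(spacetime_id):
    """Build a /decode request URL for an ID."""
    return "%s/decode/%s" % (BASE, spacetime_id)


def parse_response(body):
    """Return the spacetime attributes as a dict; raise if stat isn't "ok"."""
    rsp = ET.fromstring(body)
    if rsp.get("stat") != "ok":
        raise ValueError("request failed: %r" % body)
    st = rsp.find("spacetime")
    return {
        "x": float(st.get("x")),
        "y": float(st.get("y")),
        "z": int(st.get("z")),
        "id": st.get("id"),  # kept as a string; the sample ID is wider than 64 bits
    }


# The sample response from the examples above.
SAMPLE = ('<rsp stat="ok"><spacetime y="37.764832" x="-122.419304" '
          'z="1268809061" id="4726418083411316312844298485971"/></rsp>')

if __name__ == "__main__":
    print(encode_url(-122.419304, 37.764832, 1268809061))
    print(parse_response(SAMPLE))
```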

I'm not entirely sure what I'll use these for yet, so don't worry if you're wondering too. I just like unique identifiers because they make it easy (possible) to connect lots of little pieces in order to create new things, so I figured I would share. It's not perfect but it's a start.
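The post doesn't say how the identifiers are built. One common way to fold three coordinates into a single integer is bit interleaving, also called a Morton or Z-order code. This sketch assumes 32 bits per axis, longitude and latitude scaled into fixed ranges, and time as a Unix timestamp. It is not necessarily what spacetimeid does. At 32 bits per axis the result is a 96-bit integer, not a 64-bit one.

```python
# Hypothetical sketch of a space-time ID built by bit interleaving
# (a Morton / Z-order code). Not necessarily spacetimeid's real scheme.

BITS = 32  # bits per axis (an assumption); the ID is 3 * BITS bits wide
MAX_Q = (1 << BITS) - 1


def _quantize(value, lo, hi):
    """Map a float in [lo, hi] to an integer in [0, MAX_Q]."""
    return int(round((value - lo) / (hi - lo) * MAX_Q))


def _dequantize(q, lo, hi):
    """Inverse of _quantize, accurate to one quantization step."""
    return lo + (q / MAX_Q) * (hi - lo)


def encode(x, y, z):
    """Interleave quantized longitude (x), latitude (y) and Unix time (z)."""
    qx = _quantize(x, -180.0, 180.0)
    qy = _quantize(y, -90.0, 90.0)
    qz = int(z) & MAX_Q  # only timestamps below 2**32 (early 2106) survive intact
    n = 0
    for i in range(BITS):
        n |= ((qx >> i) & 1) << (3 * i)
        n |= ((qy >> i) & 1) << (3 * i + 1)
        n |= ((qz >> i) & 1) << (3 * i + 2)
    return n


def decode(n):
    """Pull the three interleaved axes back out of an ID."""
    qx = qy = qz = 0
    for i in range(BITS):
        qx |= ((n >> (3 * i)) & 1) << i
        qy |= ((n >> (3 * i + 1)) & 1) << i
        qz |= ((n >> (3 * i + 2)) & 1) << i
    return _dequantize(qx, -180.0, 180.0), _dequantize(qy, -90.0, 90.0), qz


if __name__ == "__main__":
    sid = encode(-122.419304, 37.764832, 1268809061)
    print(sid, decode(sid))
```

In this scheme, points that are close in space and time share their high-order bits, so their IDs tend to sort near each other. Z-order curves do have jumps at cell boundaries.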

Enjoy: http://spacetimeid.appspot.com/

Update: At Gary's request, I've added a /woe endpoint that allows you to encode and decode (WOE ID + time) pairs, too!
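The post doesn't describe how the /woe pairs are encoded. A WOE ID and a Unix timestamp are both plain integers, so one simple reversible scheme is to pack them side by side in a single integer. This is only an illustration, and it assumes the timestamp fits in 32 bits.

```python
# Hypothetical packing of a (WOE ID, Unix time) pair into one integer.
# Not necessarily what the /woe endpoint does.

TIME_BITS = 32  # assumption: timestamps before 2**32 seconds (early 2106)
TIME_MASK = (1 << TIME_BITS) - 1


def encode_woe(woeid, ts):
    """Put the WOE ID in the high bits and the timestamp in the low bits."""
    if not 0 <= ts <= TIME_MASK:
        raise ValueError("timestamp out of range")
    return (int(woeid) << TIME_BITS) | int(ts)


def decode_woe(n):
    """Split a packed ID back into (woeid, timestamp)."""
    return n >> TIME_BITS, n & TIME_MASK
```

With the WOE ID in the high bits, IDs for the same place sort together, ordered by time.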

This blog post is full of links.

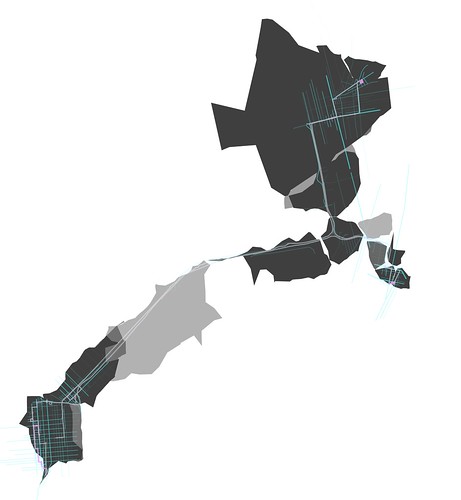

#spacetime

from piratemaps import almost

Poking around the GeoNames site tonight I noticed that they've added a new API method called findNearbyStreetsOSM. You pass it a latitude and a longitude and it returns a list of nearby street names and their geometries, derived from OpenStreetMap data.

It occurred to me that this was a lot like the thing that Andrew Turner threatened to build for me to help generate pirate maps two years ago, at FOSS4G, as we crossed the street on the way to the bar.

The GeoNames API is not perfect because it's still just returning a mechanical representation of all the streets around a point but it's a good start. If nothing else it's easy to imagine using it to pre-populate a pirate map and then adding controls to remove streets simply by clicking on them. It would be nice if the API passed along the OSM node or way IDs associated with a line segment since those would be easier to pass around than the actual line coordinates but small steps, and all that.

Here's some simple code to generate static pirate maps that I wrote to test the idea:

```python
import urllib2
import json
import Geohash

# These are non-standard Python installs
import ModestMaps
import modestMMarkers

height = 640
width = 800

lat = 37.759729
lon = -122.427283
zoom = 16

threshold = .1

# Fetch street data from GeoNames

req = 'http://ws.geonames.org/findNearbyStreetsOSMJSON?lat=%s&lng=%s' % (lat, lon)
res = urllib2.urlopen(req)
data = json.loads(res.read())

# Pull out the line data for the streets nearby
# (that don't exceed the threshold set above)
# and parcel them into simple buckets based on
# street type

lines = {
    'big' : [],
    'medium' : [],
    'small' : []
}

for s in data['streetSegment']:

    if float(s['distance']) < threshold:

        # 'line' is a comma-separated list of "lon lat" pairs
        line = []

        for pt in s['line'].split(','):
            ln, lt = pt.split(' ')
            line.append({'latitude' : float(lt), 'longitude' : float(ln) })

        if s['highway'] in ('primary', 'secondary'):
            key = 'big'
        elif s['highway'] in ('tertiary', 'residential'):
            key = 'medium'
        else:
            key = 'small'

        lines[key].append(line)

# Render a base map using ModestMaps and convert it to greyscale

provider = ModestMaps.builtinProviders[ 'OPENSTREETMAP' ]()
loc = ModestMaps.Geo.Location(lat, lon)
dims = ModestMaps.Core.Point(width, height)

mm_obj = ModestMaps.mapByCenterZoom(provider, loc, zoom, dims)
mm_img = mm_obj.draw()

mm_img = mm_img.convert('L')
mm_img = mm_img.convert('RGBA')

# Draw each bucket's worth of street lines, adjusting
# the line width and opacity based on street type

markers = modestMMarkers.modestMMarkers(mm_obj)

if len(lines['big']):
    mm_img = markers.draw_lines(mm_img, lines['big'], line_width=10, opacity=.8)

if len(lines['medium']):
    mm_img = markers.draw_lines(mm_img, lines['medium'], line_width=5, opacity=.6)

if len(lines['small']):
    mm_img = markers.draw_lines(mm_img, lines['small'], line_width=2, opacity=.4)

# Encode the latitude and longitude of the map's center point
# as a Geohash and append it to the image's filename.

fname = "piratemap-%s.png" % Geohash.encode(lat, lon)
mm_img.save(fname)
```
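The parsing and bucketing step in the script doesn't need the network or ModestMaps. It can be pulled out into a plain function and checked against a hand-made response. The sample below is invented and only mimics the fields the script reads (`streetSegment`, `distance`, `line`, `highway`). This is a Python 3 sketch, not part of the original script.

```python
# Bucket GeoNames street segments by OSM highway type, without any network calls.

BIG = ('primary', 'secondary')
MEDIUM = ('tertiary', 'residential')


def parse_line(line):
    """Turn 'lon lat,lon lat,...' into a list of {'latitude', 'longitude'} dicts."""
    points = []
    for pt in line.split(','):
        ln, lt = pt.split()
        points.append({'latitude': float(lt), 'longitude': float(ln)})
    return points


def bucket_streets(data, threshold=0.1):
    """Group segments closer than `threshold` into big/medium/small buckets."""
    lines = {'big': [], 'medium': [], 'small': []}
    for s in data.get('streetSegment', []):
        if float(s['distance']) >= threshold:
            continue
        if s['highway'] in BIG:
            key = 'big'
        elif s['highway'] in MEDIUM:
            key = 'medium'
        else:
            key = 'small'
        lines[key].append(parse_line(s['line']))
    return lines


# Invented sample shaped like the fields the script reads.
SAMPLE = {'streetSegment': [
    {'distance': '0.02', 'highway': 'secondary',
     'line': '-122.4273 37.7597,-122.4273 37.7610'},
    {'distance': '0.05', 'highway': 'residential',
     'line': '-122.4260 37.7597,-122.4285 37.7597'},
    {'distance': '0.30', 'highway': 'footway',
     'line': '-122.4300 37.7580,-122.4310 37.7585'},
]}
```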

In the next few days I will try to get around to adding support for this in both the I Am Here map and ws-pinwin. I also want to think about generating piratewalks, both live (read: on my phone) and static: Pirate maps that follow you and grow as you move around. Here's a quick and dirty example using a (GPX) track file that was generated as I went for a walk the other day. You could just as easily use your Flickr photos or foursquare checkins (and so on...) as the input, the same way you might use a different kind of pen or marker to scribble out a map by hand.
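A piratewalk starts from a track rather than a single point. This minimal sketch assumes a GPX 1.1 file with the default namespace `http://www.topografix.com/GPX/1/1`. It does three things:

- reads the `trkpt` elements with Python's standard library,
- picks every n-th point as a centre for a street lookup like the one in the pirate map script,
- works out the track's bounding box for framing the map.

The sampling step is an arbitrary assumption and the function names are hypothetical.

```python
import xml.etree.ElementTree as ET

GPX_NS = {'gpx': 'http://www.topografix.com/GPX/1/1'}


def track_points(gpx_text):
    """Return (lat, lon) tuples for every trkpt in a GPX 1.1 document."""
    root = ET.fromstring(gpx_text)
    return [(float(p.get('lat')), float(p.get('lon')))
            for p in root.iterfind('.//gpx:trkpt', GPX_NS)]


def sample(points, step=10):
    """Keep every step-th point, plus the final one, as street-query centres."""
    if not points:
        return []
    picked = points[::step]
    if (len(points) - 1) % step:
        picked.append(points[-1])
    return picked


def bounds(points):
    """Return (min_lat, min_lon, max_lat, max_lon) for a list of (lat, lon)."""
    lats = [p[0] for p in points]
    lons = [p[1] for p in points]
    return min(lats), min(lons), max(lats), max(lons)
```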

See also: proximate spaces.


#piratewalks

from diy import dwim

This morning I came across an Android app called Simple Reverse Geocoder, which does pretty much what it sounds like: It translates the location recorded by the built-in GPS unit into a street address. At a glance it seems to use Google's built-in APIs, which are fine but a bit sterile, creepy and incorrect all at the same time. The problem with relentlessly precise street-level geocoding is that it doesn't leave any room for manoeuvring, both literally and figuratively.

One of the reasons that I finally decided to buy a Nexus One is the Android Scripting Environment, which allows access to most of the interesting device-level APIs from languages that are (not Java): location information, the speech synthesizer, the file-system and so on. The APIs aren't a complete mapping of what's available in Java and the documentation is a bit sketchy, but mostly it just works and it's actually easy to install custom-written code on the phone.

Since reverse geocoding is still a subject near and dear to me, I wrote a dumb little Python script. It asks the phone where you are, uses Flickr's reverse geocoder to name that location, displays the name and opens its Places page in the web browser. Here it is, without any kind of error-handling, so all the usual caveats apply.

```python
import android
import Flickr.API
import json
import time
import random
import sys

api_key = 'YER_FLICKR_APIKEY'
api_secret = None

droid = android.Android()
api = Flickr.API.API(api_key, api_secret)

# I don't know why it's called makeToast: they're
# little non-modal dialogs that fade away after a few seconds

droid.makeToast('Asking the sky where you are. This might take a few seconds...')

droid.startLocating()
time.sleep(random.randint(5, 10))

loc = droid.readLocation()
droid.stopLocating()

lat = loc['result']['latitude']
lon = loc['result']['longitude']

droid.makeToast('Found you! Now, asking Flickr where %s, %s is in human-speak...' % (lat, lon))

req = Flickr.API.Request(method='flickr.places.findByLatLon', sign=False, lat=lat, lon=lon, format='json', nojsoncallback=1)
res = api.execute_request(req)

data = json.loads(res.read())

name = data['places']['place'][0]['name']
woeid = data['places']['place'][0]['woeid']

droid.makeToast('You\'re in %s. Let\'s look at some photos from there!' % name)

places_url = 'http://www.flickr.com/places/%s#,recent' % woeid
droid.view(places_url)

sys.exit()
```
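The script skips error handling, so here is one way the Flickr half could be made a little sturdier. This Python 3 sketch builds an unsigned `flickr.places.findByLatLon` request against Flickr's REST endpoint with only the standard library. When Flickr reports a failure or finds no place, it returns `None` instead of crashing.

The endpoint URL and the `stat` field come from Flickr's public REST API. The function names are hypothetical. Fetching the body, for example with `urllib.request.urlopen(url).read()`, is left out so the parsing can be checked offline.

```python
import json
from urllib.parse import urlencode

REST = 'https://api.flickr.com/services/rest/'


def find_by_lat_lon_url(api_key, lat, lon):
    """Build an unsigned flickr.places.findByLatLon request URL."""
    params = {
        'method': 'flickr.places.findByLatLon',
        'api_key': api_key,
        'lat': lat,
        'lon': lon,
        'format': 'json',
        'nojsoncallback': 1,
    }
    return REST + '?' + urlencode(params)


def first_place(body):
    """Return (name, woeid) for the first place in a response, or None."""
    data = json.loads(body)
    if data.get('stat') != 'ok':
        return None
    places = data.get('places', {}).get('place', [])
    if not places:
        return None
    return places[0]['name'], places[0]['woeid']
```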

Even better, though, is the fact that you don't even need a high-level language like Python to do this on your phone anymore. You can do it straight from a web browser but the more the merrier. Everything should be conceptually as simple as the View Source rule. I've already written a little app that plays both Nagios and Ganglia on TV, sitting in the background and polling for stats every 60 seconds and speaking to me (read: waking me up when I'm asleep) if condition x is triggered. That kind of freedom to tinker is pretty great.
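The Nagios/Ganglia watcher boils down to a polling loop: fetch a number, compare it to a threshold, and speak up when the condition is triggered. In this minimal sketch, fetching and speaking are passed in as plain functions, so nothing assumes a particular monitoring or phone API. `fetch_stat`, `speak` and the threshold are hypothetical stand-ins. On the phone, `speak` might wrap `droid.speak`, as in the Twitteradio code below.

```python
import time


def watch(fetch_stat, speak, threshold, interval=60, max_polls=None, sleep=time.sleep):
    """Poll fetch_stat() every `interval` seconds; speak when it crosses threshold.

    Only transitions are announced (ok -> alert, alert -> ok) so it doesn't
    repeat itself every minute. max_polls=None means run forever.
    """
    alerting = False
    polls = 0
    while max_polls is None or polls < max_polls:
        value = fetch_stat()
        if value > threshold and not alerting:
            speak('Alert: value is %s, above %s' % (value, threshold))
            alerting = True
        elif value <= threshold and alerting:
            speak('Recovered: value is back to %s' % value)
            alerting = False
        polls += 1
        if max_polls is None or polls < max_polls:
            sleep(interval)
```

Announcing only transitions is a design choice. Speaking on every poll while the condition holds is a one-line change, but it makes for a noisier night.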

And just for kicks, here's the infamous Twitteradio app I wrote for Series60 Python three years ago in a mere 12 lines of code. In other words: Still just as annoying but I am a simple man...

```python
import android
import urllib2
import time
import json

droid = android.Android()
url = 'http://twitter.com/statuses/public_timeline.json'

while True:

    # json.loads wants a string, so read the response body first
    res = urllib2.urlopen(url)
    data = json.loads(res.read())

    for tw in data:
        droid.speak(tw['text'])

    # let the phone finish speaking
    time.sleep(len(data))
```


#pydroid

Arranging Moments

I made some new things.

One of them's been kicking around for a while, the product of a couple of failed attempts and years of frustration with the From Your Contacts page on Flickr. The other is aggressively simple but also the thing everyone who works on the Flickr engineering team jokes about building, sooner or later.

(If you don't believe me just ask Myles.)

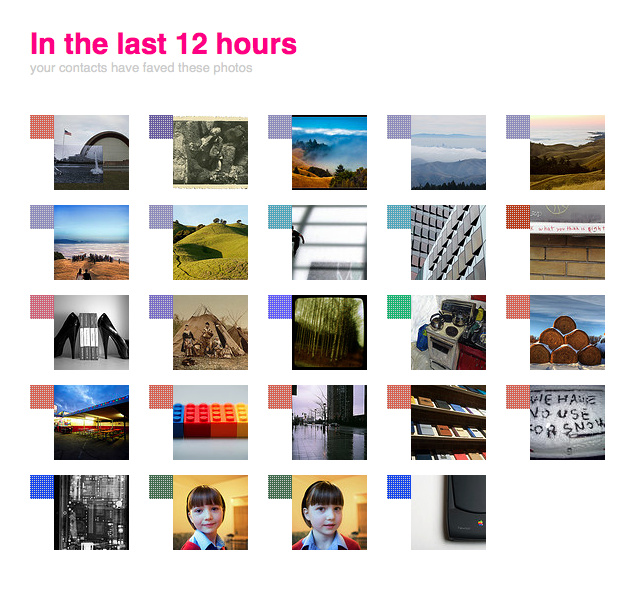

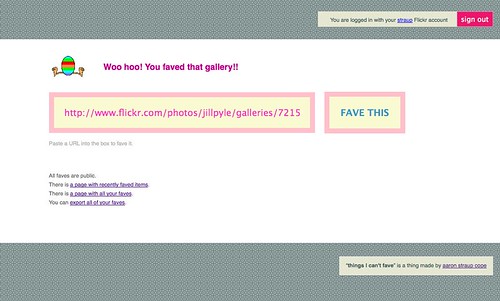

They are Contacts Who've Faved and Things I Can't Fave, respectively.

The first shows you recent photos (like the last 6 to 12 hours) that your contacts have favourited. The second is a place to favourite things that can't normally be faved on Flickr: sets, collections, galleries, groups, other Flickr users and comments on photos.

They are both web apps built on top of AppEngine, using FlickrApp.

The first is basically awesome. I've never really used groups on Flickr, which is a big part of the way that people have discovered new photos. Most of my photos are public, but I tend to stick close to home, and the things that really interest me are the things that people I know, or whose work I enjoy, like: their favourites. (This is one of the reasons that, internally, Galleries were often confused with, or assumed to be just, sets of faves, but that's a story for another day.) The best part is not that I end up faving the same things my contacts do, but that I end up making the person who took a photo that my contact faved a new contact. And then their faves start to show up in Contacts Who've Faved!

I have added as many, or more, new contacts — as in I have no idea who you are but I really like your work and would like to see more of it and not just collect your buddyicon — in the 6 or 7 months I've been tinkering with this as I have in the last 2 or 3 years.

The second is, in some respects, nothing but a series of big lists with RSS feeds (and a Twitter account). It's a little bit of a joke, except for the part where everyone really wants to be able to favourite comments. So, in the same vein as Fake Subway APIs, I built a dumb little site where you could. There's no reason you couldn't have done the same thing on top of del.icio.us, but there's something to be said for a very focused bespoke application that is more concerned with functionality than abstraction. Things I Can't Fave is about people doing (or not...) stuff on Flickr even if, under the hood, it is just buckets of search.

It's still a bit clunky to use: You need to copy and paste URLs from Flickr into a form on the (thingsicantfave) site, which is not ideal. There's a handy bookmarklet to try and take some of the pain away, but since there's still no proper API for third-party applications to use there's no getting around a little bit of clicking and confirming.

That said, it did allow me to discover a wonderful photoset of breakfast faces that Matt faved while I was asleep last night.

There's also a dancing egg.
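Since URLs get pasted in by hand, a site like Things I Can't Fave has to work out what kind of thing each one points at. Here is a rough sketch of that classification with regular expressions. The URL shapes are assumptions based on how Flickr URLs commonly looked, not a list taken from the site's code. Comment permalinks in particular may not match, and the function name is hypothetical.

```python
import re

PREFIX = r'^https?://(?:www\.)?flickr\.com'

# Assumed URL shapes, most specific first.
PATTERNS = [
    ('comment', r'/photos/([^/]+)/(\d+)/?#(comment\d+)$'),
    ('set', r'/photos/([^/]+)/sets/(\d+)/?$'),
    ('collection', r'/photos/([^/]+)/collections/(\d+)/?$'),
    ('gallery', r'/photos/([^/]+)/galleries/(\d+)/?$'),
    ('group', r'/groups/([^/]+)/?$'),
    ('user', r'/(?:photos|people)/([^/]+)/?$'),
]


def classify(url):
    """Return (kind, captured parts) for a Flickr URL, or None if unrecognized."""
    for kind, pattern in PATTERNS:
        m = re.match(PREFIX + pattern, url.strip())
        if m:
            return kind, m.groups()
    return None
```

Anything that comes back `None`, such as a plain photo page, could be sent back to the user for confirmation.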

That brings to five the number of bespoke Flickr applications I've built using the API and AppEngine:

- Flickr For Busy People which displays a list of your Flickr contacts who have uploaded photos in the last 30 minutes, two hours, four hours and eight hours.

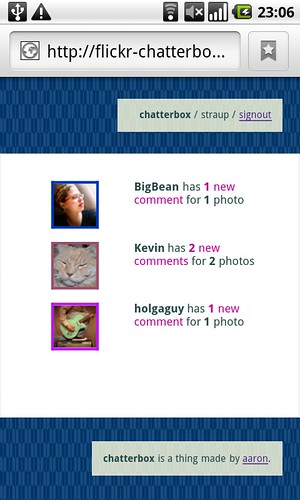

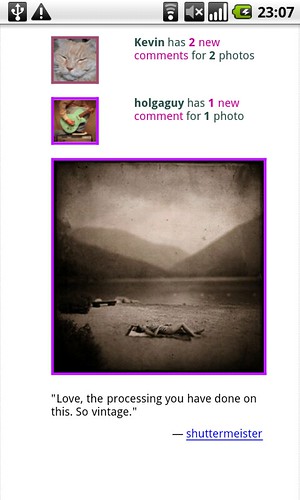

- Flickr Chatterbox which displays recent comments that have been added to your Flickr contacts' photos.

- Contacts Who've Faved which displays recently faved photos from your contacts.

- Things I Can't Fave allows you to favourite things you can't otherwise fave on Flickr: things like sets, collections, galleries, groups, comments and even other Flickr users.

- Suggestify allows you to suggest locations (geotags) for photos on Flickr.
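Flickr For Busy People's lists come down to putting each contact into the smallest window that contains their most recent upload. Here is a sketch of that step. It assumes you already have a mapping of each contact to their latest upload time as a Unix timestamp. Fetching that from the Flickr API is left out, and the data shape and function name are hypothetical.

```python
# Windows in seconds: 30 minutes, two, four and eight hours.
WINDOWS = [(30 * 60, '30m'), (2 * 3600, '2h'), (4 * 3600, '4h'), (8 * 3600, '8h')]


def busy_buckets(latest_upload, now):
    """Group contacts by the smallest window containing their latest upload.

    latest_upload maps a contact name to a Unix timestamp (hypothetical shape).
    Contacts who haven't uploaded in the last eight hours are left out.
    """
    buckets = {label: [] for _, label in WINDOWS}
    for contact, ts in sorted(latest_upload.items()):
        age = now - ts
        for limit, label in WINDOWS:
            if 0 <= age <= limit:
                buckets[label].append(contact)
                break
    return buckets
```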

The first three work by rolling up a whole bunch of data (you know, photos) and sorting them by a username. That puts the focus back on the individual rather than the photos but I find it useful as a way to make a first pass through the volume of stuff that comes through Flickr every day. If someone uploads 300 photos all at once I can probably guess they've just gotten back from vacation or a day trip. If they're uploading something new every 30 minutes then I'll probably start to wonder what they're up to. The important part is not how people make those distinctions but that they can. And the really important part is that hopefully stuff like this can help some number of photos that would normally get washed away in the firehose bubble up, however briefly.
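The roll-up behind the first three apps can be sketched as grouping photos by owner, then looking at how many each owner contributed. That count is what separates the 300-photos-from-vacation person from the one uploading every 30 minutes. This sketch assumes each photo is a dict with a hypothetical `owner` key; the real apps' data structures aren't described here.

```python
from collections import defaultdict


def roll_up(photos):
    """Group photos by owner and return [(owner, [photos...]), ...] sorted by owner.

    Assumes each photo is a dict with a hypothetical 'owner' key; each
    owner's photos keep the order they arrived in.
    """
    by_owner = defaultdict(list)
    for photo in photos:
        by_owner[photo['owner']].append(photo)
    return sorted(by_owner.items(), key=lambda item: item[0].lower())


def summarize(photos):
    """One line per owner with a photo count, busiest owners first."""
    rolled = roll_up(photos)
    rolled.sort(key=lambda item: len(item[1]), reverse=True)  # stable: ties stay alphabetical
    return ['%s: %d' % (owner, len(ps)) for owner, ps in rolled]
```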

I also like that they are separate and distinct applications, where each site is a different kind of filter for building a new table of contents back into Flickr itself. Things I Can't Fave and Suggestify store data, but only out of necessity. The rest are, by design and motivation, nothing more than a lens to arrange moments (in the Flickr world) and allow people the space to create small bridges between them. Or, in simpler terms: A reason to hit the reload button.

Enjoy!


#moments

Agency is the Intelligent Design of the Internet

I was lucky enough to be invited to attend Microsoft's Social Computing Symposium last month. Highlights for me were:

- Kevin Slavin's epic talk about hiding in plain sight.
- Usman Haque getting people worked up enough to actually shout out loud what they were thinking, instead of emoting pithy Twitter messages in silence.
- Alex's beautiful shout-out to the deep city.

Not everyone spoke but we were all encouraged to pitch an Ignite-style talk and we voted on those we'd hear. Mine didn't make the cut which is probably best because I'm not sure I was in any state to pull off weaving together the stampede of ideas I've been tossing around in the margins, the long and twisty conversations with Kellan and the increasingly cryptic notes to myself.

I still haven't managed to form a coherent shape of things but since I don't think the circumstances are going to permit that to happen any time soon I'm just going to toss it all out (t)here as is. The broad theme of the symposium was cities, in that way that everyone is thinking about what it means to be paving the world, in both the private and public sectors, with sensors and a network infrastructure for broadcasting all that data.

If you're hoping for a coherent narrative to follow, you'll probably be disappointed. If nothing grabs you then, just as an exercise, try taking the headers as a starting point for a different five-minute talk and let me know where it takes you.

This is the story I would have told (to Myles).

Data, not answers.

Were it not for that terrible article, in an early issue of Wired, about intelligent agents running around buying you plane tickets while you slept, would we still be so hung up on the notion of computers being able to second-guess what you're thinking?

I've been talking about the notion of data not answers for a long time. Another way of describing that process is small tools for self-organization, which proved to be a pretty successful approach at Flickr. Computers are still dumb. We are better served by building systems with lots of nubby bits (rough surfaces that allow people to grab on and that collect ephemera as they travel through time) and opportunities for people to re-arrange them, rather than designing automated thought-leaders.

Rough surfaces and Diebold meters.

There was a great story, in the New York Times, about how people in California are bucking at the smart-meters they've been asked to install because they don't believe they are being fairly billed.

PG&E attributes the higher bills that some consumers complain about to recent rate increases and to quirks in California’s pricing system. Electricity in the state is priced in so-called tiers: consumers get the first few hundred kilowatt-hours at a low rate, but the next few units of consumption are billed at a high rate. A small increase in use can therefore result in a big increase in the bill, the utility says.
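The tier arithmetic is easy to gloss over in prose, so here is a toy version. The tier sizes and rates are invented for illustration and are not PG&E's actual tariff.

```python
# Invented tiers: (kWh in this tier, dollars per kWh). None means no upper limit.
TIERS = [(300, 0.12), (100, 0.17), (None, 0.30)]


def bill(kwh, tiers=TIERS):
    """Charge each slice of consumption at its own tier's rate."""
    total = 0.0
    remaining = kwh
    for size, rate in tiers:
        used = remaining if size is None else min(remaining, size)
        total += used * rate
        remaining -= used
        if remaining <= 0:
            break
    return round(total, 2)
```

With these made-up numbers, `bill(400)` is $53 and `bill(500)` is $83. Using 25% more electricity raises the bill by about 57%, because every extra unit lands in the most expensive tier.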

Which got me wondering if all smart meters will be mandated to display a new license agreement every time there's a price change. This happens to me all the time in iTunes when I try to buy something. I have to stop what I'm doing and click through yet another EULA, presumably because Apple's cut a new deal with producer-X. Then I have to go back and click buy again. Can you imagine having to do that every time you turn on the lights?

Text versus Stuff.

Joe Gregorio wrote a really interesting post about how CCDs are basically p0wning RFID because they're cheaper and easier to use. They are the 80 to RFID's 20.

Text — plain old words — seems to occupy a similar territory.

The Eixample is not a useful parallel.

The problem with talking about Barcelona's Eixample neighbourhood as an example of urban planning and Utopian community-building is that it's failed on almost every level. It was born of sturdy, well-meaning, community-minded ideals, but it was never finished. The original designs (three stories to a building instead of the five that exist today, and beautiful interior courtyards) were abused beyond recognition. The Diagonal (the boulevard that runs through it) quickly became a class-based dividing line cutting the entire thing in half.

It's also a pretty awful part of Barcelona to spend any amount of time in, since it's nothing but block after block of enormous buildings. The part where they're all shaved at the corners gets old pretty quickly. It's worth noting that when the city embarked on another large-scale redevelopment in the 1980s, they spent most of their time building lots of small, very simple parks and public squares scattered throughout the entire city.

They are the pockets that make being in a city the size of Barcelona not just manageable but special.

The role of megalomaniacs.

I don't want to be an apologist for Robert Moses. It's just curious how, after the fact, we may continue to decry the acts of a single person imposing their will (and their ego) on a city but we celebrate the legacy (typically the physical things) those acts leave behind. Moses in New York, Haussmann in Paris, Drapeau in Montréal and so on.

All of this has happened before.

There's often a weird Libertarian streak to these conversations that answers the question of how we'll actually build a shared resource of all this network-sensor love with the invisible hand of the market or self-organizing off-the-shelf DIY can-do resourcefulness. You know, stuff like mesh networks. I've got no problem with ideas like that, in principle, except that there's nothing to demonstrate that it won't be abused and shat all over in practice.

Can you really imagine the people heavily invested in the real estate markets in cities like New York or London or Tokyo operating a shared mesh network that would offer an advantage or even just a level playing field to the people they are competing with? No, me neither.

Which isn't to say it can't be done. In fact, we've done it before. We've built a publicly funded and supervised shared infrastructure that private citizens and commercial enterprises can use: It's called the sewage system and we're the better for it. Say what you want about municipal governments or unions, but your toilet still works. Which is to say that we know how to do this stuff, but equally that it gets thrown in the ring with all the other needs, wants and priorities that come from living together as communities. It's called public policy and it's not sexy, but it's necessary.

Jones' battlesuit is a gateway drug for civic participation.

I wasn't really sure what to make of Matt Jones' The City Is A Battlesuit For Surviving The Future the first time I read it. Eventually I decided that it was really an invitation for people to participate, to be involved and to help shape the cities and communities they live in, sung in the form of a space-rock-opera.

People there really are walking architecture.

Augmented reality is boring (or: History Clippy must die).

I look forward to being proven wrong about augmented reality but I'm not ready to hold my breath yet. That's all I will say about it, for now. Instead I'll talk about maps.

It took me a while to warm up to Dolores Park when we moved to San Francisco. It's not the prettiest of parks but, over time, I began to realize and appreciate that it's one of the few parts of the city where people go to be alone, together. So much of the rest of the city is a designed experience. Sometimes that's an attempt to get you to open your wallet. Or, in the case of San Francisco, it's some place you can take your dog and talk to other people with dogs.

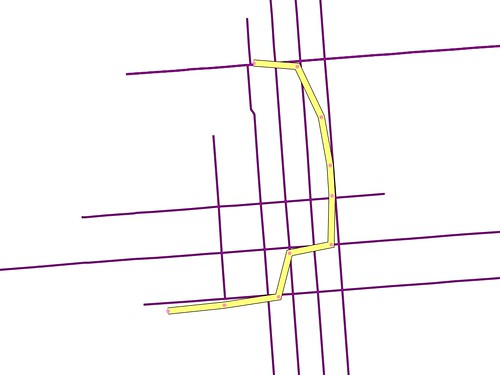

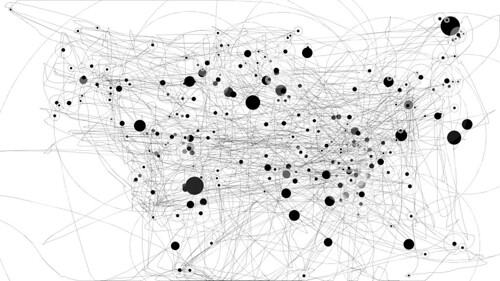

I want more things like Dolores Park, things that embrace the quiet rather than the firehose of ubiquitous broadcasting that is all the rage these days. I want maps like that. I want a map of my neighbourhood, or of a city I'm visiting, that is just the history of the places the people I know have been. The kids over at UrbanTick have been poking at this idea for a while but it's still a bit wonkish. I want something like Cabspotting for my friends and I want it to be simple.

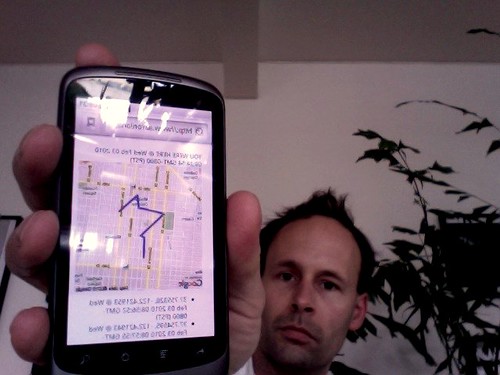

So I built a poor man's web-based GPS recording device using my phone and the geolocation and local storage APIs that are starting to become available in newer web browsers. Translation: I load a web page that periodically asks the GPS/WiFi robots on my phone where I am and then store that information locally and display a history of my movements on a map.

Here's me walking to work:

Here's me taking a picture of my phone because it's too fucking stupid to know how to take screenshots of itself...

As it's written today there's an export button that will dump your location history to the screen. In the future I'd like to be able to push all that data to a proper web application where it can be aggregated and shared and made more than the sum of its parts. You know: To be alone, together.

There's also a purge history button, if you're wondering.
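Under the hood, a page like this keeps a growing list of location fixes, and export and purge are two operations on that list. The page does this in the browser with the geolocation and local storage APIs. What follows is only a sketch of the same data model in Python. The class name and JSON layout are hypothetical, and it assumes each fix is stored with a timestamp.

```python
import json
import time


class LocationHistory:
    """Append-only list of timestamped fixes with export and purge."""

    def __init__(self):
        self.fixes = []

    def record(self, lat, lon, ts=None):
        """Store one fix; ts defaults to the current Unix time."""
        self.fixes.append({'ts': int(time.time() if ts is None else ts),
                           'lat': lat, 'lon': lon})

    def export(self):
        """Dump the whole history as a JSON string (hypothetical layout)."""
        return json.dumps(self.fixes)

    def purge(self):
        """Forget everything."""
        self.fixes = []
```

An exported JSON string like this is also the sort of thing that could later be pushed to a shared web application.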

It's not perfect. It's not even awesome... well, it's a little awesome. It's definitely the toolset I've been waiting for since the infamous DOOM-DOOM-DOOM geo panel at SXSW in 2007. The part where it just works in a web browser, though, and I don't have to ask people to pay for or install random device-hardware-manufacturer specific crap: That is awesome.

There are still plenty of mistakes, like the one where I didn't really duck down to South Van Ness at 18th. My best guess is that's where the nearest cell tower is when you're standing two blocks away waiting for the street light to change (the same thing happened on the way home). For now, I'm okay with that. It's good enough and I'm betting we can do something interesting with those hiccups.
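One cheap way to catch hiccups like that, without throwing them away, is to set aside any fix that implies an implausible speed from the last trusted point. This sketch assumes fixes are `(lat, lon, unix_time)` tuples and treats anything faster than 10 m/s on foot as suspect. Both the tuple shape and the threshold are assumptions, and the function names are hypothetical.

```python
import math


def haversine_m(lat1, lon1, lat2, lon2):
    """Great-circle distance in metres (spherical Earth, radius 6371 km)."""
    p1, p2 = math.radians(lat1), math.radians(lat2)
    dp, dl = p2 - p1, math.radians(lon2 - lon1)
    h = math.sin(dp / 2) ** 2 + math.cos(p1) * math.cos(p2) * math.sin(dl / 2) ** 2
    return 2 * 6371000 * math.asin(math.sqrt(h))


def split_jumps(fixes, max_speed=10.0):
    """Split fixes into (kept, jumps) by the speed implied from the last kept fix."""
    kept, jumps = [], []
    for fix in fixes:
        if not kept:
            kept.append(fix)
            continue
        lat0, lon0, t0 = kept[-1]
        lat, lon, t = fix
        dt = max(t - t0, 1)  # avoid dividing by zero for duplicate timestamps
        if haversine_m(lat0, lon0, lat, lon) / dt > max_speed:
            jumps.append(fix)
        else:
            kept.append(fix)
    return kept, jumps
```

Keeping the jumps in their own list leaves room to do something with them later. One known weakness: if the very first fix is the bad one, the good fixes after it look like jumps.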

Right now, I'm trying to decide if I want to run my own infrastructure for this stuff. From a privacy and creepiness standpoint it makes the most sense. At least for me. Maybe for anyone else, it's an equal kind of toss-up whether they'd be comfortable handing over their location data to me or to someone like Google. Whatever else you want to say about the GOOG, they've gotten better and closer at nailing the administrivia involved in setting up these kinds of bespoke applications, with tools like AppEngine. Mike suggested just storing everything in OpenStreetMap and I considered abusing Delicious (again). Both are tempting in a street-finds-its-own-use kind of way. But both approaches also seem a bit mean-spirited and run counter to the goals of either service, so I'm punting on those for now.

Maybe it's possible to continue to use AppEngine but do all the posting over HTTPS while also pulling a Wesabe: encrypting any data not explicitly made public on the way into the giant all-seeing Google database in the cloud. I don't know yet. I built this over morning coffee, yesterday. I am writing this blog post over morning coffee, today.

If all of this sounds strangely familiar and prescient it's because Tom Coates was right.

So far, this has been tested using the browser on the Nexus One and Firefox 3.6. I don't know whether it will work with other Android phones or Maemo. There's at least one geolocation plugin for the default browser on the N900 and I think that the geolocation and local storage stuff is part of Fennec, which is Firefox en-mobilized. It does not work in Safari or mobile Safari yet, at least not on my ancient iPod Touch. I'd love to know whether it works on newer iPhones.

As an aside, using and starting to program for the Nexus One is basically like writing bespoke Python applications for Nokia, before the Symbian 9 / Series60 v3 catastrophe (read: 2005/2006), but with better hardware which should give you some idea of just how huge a lead Nokia squandered. Also, I can not begin to tell you how much the camera on the Nexus One sucks soggy rocks. That was always my suspicion looking around at the reviews. I charged ahead anyway. The Nexus One does not move the Earth but it's pretty good and easier to tinker with than any of the alternatives. The part where the camera sucks makes me reconsider at least once a day, though.

You can try it for yourself at:

http://www.aaronland.info/followme/

As always, the source code is available over on GitHub.


#agency