The Medium is the Message (and pubsocketd)

This was a blog post originally written for the Cooper Hewitt Labs weblog and published in August, 2014.

Have you ever wanted to see a real-time view of all the objects that people are looking at on the collections website? Now you can!

At least for objects with images. There are lots of opportunities to think about interesting ways to display objects without images but since everything that follows has been a weekends-and-mornings project we’ve opted to start with the “simple” thing first.

We have lots of different ways of describing media: 12,865 ways at last count, to be precise. The medium with the most objects (2,963) associated with it is cotton but all of these numbers are essentially misleading. The history of the cataloging of the collection has favored precision and detail over the kind of rough bucketing (for example, tags) that lots of people are used to these days.

It’s a practice that can sometimes seem frustrating in the moment but, in the long-run, we’re better served for it. In time we will get around to assigning high-level categorizations for equally high-level browsing but it’s worth remembering that the practice of describing objects in minute detail predates things like databases, which we take for granted today. In fact these classifications, and their associated conventions and rituals, were the de-facto databases before computers or databases had even been invented.

But 13,000 different media, most of which only describe a single object, can be overwhelming. Where do you start? How do you know what to look for? Given the breadth of our collection what don’t we have? And given the level of detail we try to assign to objects how do you know whether a search doesn’t yield any results because the thing is not in our collection or simply because we’re using a different name for the same thing you’re looking for?

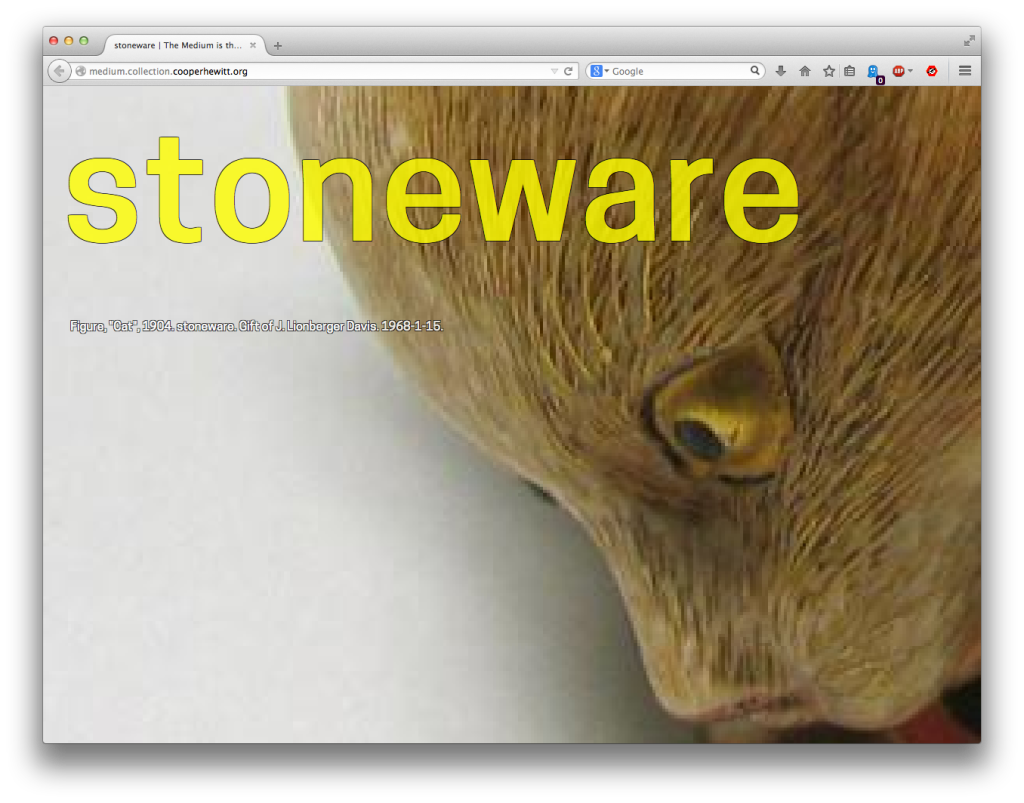

This is a genuinely Big and Hairy Problem and we have not solved it yet. But the ability to relay objects as they are viewed by the public, in real-time, offers an interesting opportunity: What if we just displayed (and where possible, read aloud) the medium for that object?

That’s all The Medium is the Message does: It is an ambient display that lets you keep an eye on the kinds of things that are in our collection and offers a gentle, polite way to start to see the shape of all the different things that tell the story of the museum. It’s not a tool to help you take a quiz so much as a way to absorb an awareness of the collection as if by osmosis. It shows people one aspect of the collection as an avenue to begin understanding its entirety.

We’re not thinking enough about sound. If we want all these things to communicate with us, and we don’t want to be staring at screens and they’re going to do more than flash a couple of lights, then we need to work with sound. Either ‘sound effects’ that mean something or devices that talk to us. Personally, I think it’ll be the latter morphing into the former. And this is worth thinking about because it’s already creeping up on us. Self-serve checkouts are talking at us, reversing trucks are beeping at us, trucks turning left are barking at us, incoherently – all with much less apparent thought and ‘design’ than we devote to screens.

— Russell Davies, the internet of talking

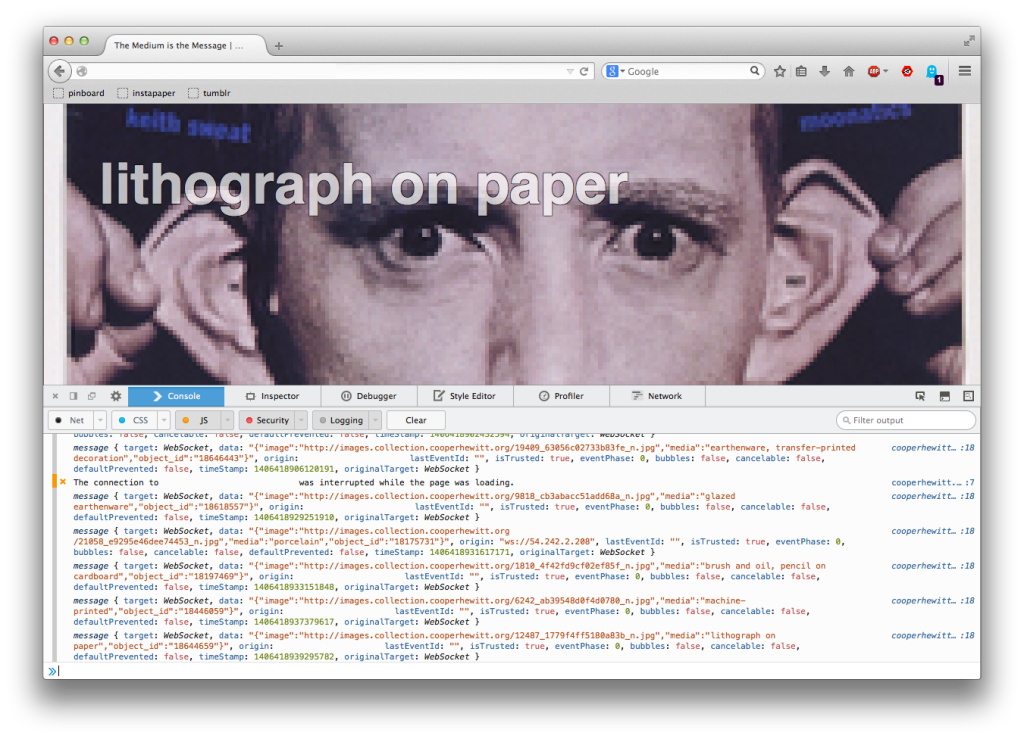

While The Medium is the Message is a full-screen application that displays a scaled-up version of the square-crop thumbnail for an object it also tries to use your browser’s text-to-speech capabilities to read aloud that object’s medium. It may not be the kind of thing you want playing in a room full of people but alone in your room, or under a pair of headphones, it’s fun to imagine it as a kind of Music for Airports for cultural heritage.

Text-to-speech is currently best supported in Chrome and Safari. Conversely the best support for crisp and pixelated image-rendering is in Firefox. Because… computers, right?

For the time being The Medium is the Message lives in a little sand-box all by itself over here:

http://medium.collection.cooperhewitt.org

Eventually we hope to merge it back in to the main collections website but since it’s all brand-new we’re going to put it some place where it can, if necessary, have little melt-downs and temper-tantrums without adversely affecting the rest of the collections website. It’s also worth noting that some internal networks – like at a big company or organization – might still disallow WebSockets traffic which is what we’re using for this. If that’s the case try waiting until you’re home.

And now, for the Nerdy Bits: The rest of this blog post is capital-T technical so you can stop reading now if that’s not your thing (though we think it’s still pretty interesting even if the details sound like gibberish).

The Medium is the Message is part of a larger project to investigate a few different tools in order to understand how they might fit together and to what effect. They are:

- Redis and in particular its implementation of the Publish/Subscribe messaging paradigm – Every once in a while there’s a piece of software that is released which feels like genuine magic. Arguably one of the last examples of this was memcached, originally written by Brad Fitzpatrick for the website LiveJournal, without which entire slices of the web as we now know it wouldn’t exist. Redis and memcached are similar in spirit in that their feature-set is limited by design but what they claim to do Just Works™ and both have broad support across the landscape of programming languages. That last piece is incredibly important since it means we can use Redis to bridge applications written in whatever language suits the problem best. We’ll return to that idea in the discussion of “step 0” below.

- WebSockets – WebSockets are a way for a web browser and a server to create and maintain a persistent connection and to shuttle messages back and forth. Normally the chatter between a browser and a server is akin to the way two people might send each other postcards in the mail, whereas WebSockets are more like a pair of teenagers calling each other on the phone and talking for hours and hours and hours. Sort of like Pub/Sub for a web browser, right? WebSockets have been around for a few years now but they are still a bit of a new territory; super-cool but not without some pitfalls.

- Go – Go is a programming language from the nice people at Google that recently celebrated its fourth anniversary. It is part of a growing trend in language design to find a middle ground between loosely typed languages and the need to develop stable applications with a minimum of fussiness. Go is probably not the language we would develop a complex user-facing application in but for long-running services with well-defined boundaries it seems kind of perfect. (Go’s notion of code-based channels is a fascinating parallel to both Pub/Sub and WebSockets but that’s a whole other blog post.)

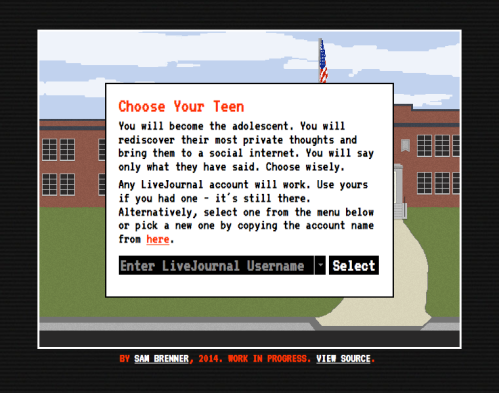

Fun fact: The Labs’ very own Sam Brenner’s ITP thesis project called Adventures of Teen Bloggers is an archive of old LiveJournal accounts in the shape of an 8-bit video game!

In order to test all of those technologies and how they might play together we built pubsocketd, which is a simple daemon written in Go that subscribes to a Pub/Sub channel and ferries those messages to a browser using WebSockets (WS). It does three things:

- Listen for messages from a specific (Redis) Pub/Sub channel

- Accept incoming WS requests

- Shuttle any messages from the Pub/Sub channel to all the open WS connections

That’s it. It is left up to WS clients (your web browser) to figure out what to do with those messages.
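The fan-out in that last step can be sketched with nothing but Go channels. What follows is a minimal illustration and not the pubsocketd source: the `Hub` type and its methods are hypothetical names, plain channels stand in for the open WS connections, and the Redis subscription is reduced to a call to `Broadcast`.

```go
package main

import (
	"fmt"
	"sync"
)

// Hub fans one stream of messages out to every registered client.
// Hypothetical type for illustration; not taken from pubsocketd.
type Hub struct {
	mu      sync.Mutex
	clients map[chan string]struct{}
}

func NewHub() *Hub {
	return &Hub{clients: make(map[chan string]struct{})}
}

// Join registers a client and returns the channel it should read from.
func (h *Hub) Join() chan string {
	ch := make(chan string, 16) // buffered so one slow client does not stall the rest
	h.mu.Lock()
	h.clients[ch] = struct{}{}
	h.mu.Unlock()
	return ch
}

// Leave removes a client and closes its channel.
func (h *Hub) Leave(ch chan string) {
	h.mu.Lock()
	defer h.mu.Unlock()
	if _, ok := h.clients[ch]; ok {
		delete(h.clients, ch)
		close(ch)
	}
}

// Broadcast offers msg to every client and returns how many accepted it.
// A client whose buffer is full simply misses the message.
func (h *Hub) Broadcast(msg string) int {
	h.mu.Lock()
	defer h.mu.Unlock()
	sent := 0
	for ch := range h.clients {
		select {
		case ch <- msg:
			sent++
		default:
		}
	}
	return sent
}

func main() {
	hub := NewHub()
	a, b := hub.Join(), hub.Join()
	hub.Broadcast("cotton") // in a real daemon this would be called for each Pub/Sub message
	fmt.Println(<-a, <-b)   // prints "cotton cotton"
}
```

Dropping messages for a slow client is a design choice that suits an ambient display, where missing one object matters less than keeping everyone else current.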

    $> ./pubsocketd -ws-origin=http://example.com
    2014/08/01 17:23:38 [init] listening for websocket requests on 127.0.0.1:8080/, from http://example.com
    2014/08/01 17:23:38 [init] listening for pubsub messages from 127.0.0.1:6379 sent to the pubsocketd channel
    2014/08/01 17:23:44 [10.20.30.40][10.20.30.40:56401][handshake] OK
    2014/08/01 17:23:44 [10.20.30.40][10.20.30.40:56401][request] OK
    2014/08/01 17:23:44 [10.20.30.40][10.20.30.40:56401][connect] OK
    2014/08/01 17:23:53 [10.20.30.40][10.20.30.40:56401][send] OK
    2014/08/01 17:24:05 [10.20.30.40][10.20.30.40:56401][send] OK
    # and so on...

The “step 0” in all of this is the ability for the collections website itself to connect to a Redis server and send a Pub/Sub message, whenever someone views an object, to the same channel that the pubsocketd server is listening to.

This allows for a nice clean separation of concerns and provides a simple way for related, but fundamentally discrete, applications to interact without getting up in each other’s business.
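Because a Redis command is just a small array of strings on the wire, "step 0" is cheap to do from any language. The sketch below shows the idea in Go using only the standard library. It is an illustration and not the collections website's code: the Redis address and the `pubsocketd` channel name are the ones in the log output shown earlier, while the JSON payload and the helper names are made up.

```go
package main

import (
	"bufio"
	"fmt"
	"net"
	"strconv"
	"strings"
)

// encodeCommand serialises a command as a RESP array of bulk strings,
// which is the wire format Redis expects from clients.
func encodeCommand(args ...string) string {
	var b strings.Builder
	b.WriteString("*" + strconv.Itoa(len(args)) + "\r\n")
	for _, a := range args {
		b.WriteString("$" + strconv.Itoa(len(a)) + "\r\n" + a + "\r\n")
	}
	return b.String()
}

// publish sends PUBLISH <channel> <message> and returns the first reply line.
// On success Redis answers with an integer such as ":1", the number of
// subscribers that received the message.
func publish(conn net.Conn, channel, message string) (string, error) {
	if _, err := conn.Write([]byte(encodeCommand("PUBLISH", channel, message))); err != nil {
		return "", err
	}
	reply, err := bufio.NewReader(conn).ReadString('\n')
	return strings.TrimSpace(reply), err
}

func main() {
	conn, err := net.Dial("tcp", "127.0.0.1:6379")
	if err != nil {
		fmt.Println("no Redis server reachable:", err)
		return
	}
	defer conn.Close()

	// Hypothetical payload; the real message format is not described in this post.
	reply, err := publish(conn, "pubsocketd", `{"medium":"cotton"}`)
	if err != nil {
		fmt.Println("publish failed:", err)
		return
	}
	fmt.Println("redis replied:", reply)
}
```

In practice a maintained Redis client library would handle the encoding, connection reuse and error replies; writing it out by hand is only meant to show how little the publisher needs to know about whoever is listening.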

Given the scope of the project we probably could have accomplished the same thing, with less scaffolding, using Server-Sent Events (SSE) but this was as much an exercise designed to get our feet wet with both WebSockets and Go as anything else, so it’s been worth doing it the “hard way”.

Matthew Rothenberg, creator of the popular EmojiTracker, was nice enough to open-source the Go-based SSE server endpoint he wrote to feed his application and we may eventually re-write The Medium is the Message, or future applications like it, to use that.
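For a sense of how little scaffolding SSE needs, here is a rough sketch of a Go handler that streams messages as `text/event-stream`. It is neither pubsocketd nor the EmojiTracker server: `sseHandler` and `render` are hypothetical names, the `events` channel stands in for a Pub/Sub subscription, and messages are assumed to be single lines.

```go
package main

import (
	"fmt"
	"net/http"
	"net/http/httptest"
)

// sseHandler streams each message from events to the client as one SSE event.
// With several clients, each would need its own channel; a shared channel
// would hand each message to only one of them.
func sseHandler(events <-chan string) http.HandlerFunc {
	return func(w http.ResponseWriter, r *http.Request) {
		flusher, ok := w.(http.Flusher)
		if !ok {
			http.Error(w, "streaming unsupported", http.StatusInternalServerError)
			return
		}
		w.Header().Set("Content-Type", "text/event-stream")
		w.Header().Set("Cache-Control", "no-cache")
		for {
			select {
			case <-r.Context().Done(): // client went away
				return
			case msg, open := <-events:
				if !open {
					return
				}
				// An event is one or more "data:" lines followed by a blank line.
				fmt.Fprintf(w, "data: %s\n\n", msg)
				flusher.Flush()
			}
		}
	}
}

// render runs the handler against an in-memory recorder and returns the raw stream.
func render(msgs ...string) string {
	events := make(chan string, len(msgs))
	for _, m := range msgs {
		events <- m
	}
	close(events)
	rec := httptest.NewRecorder()
	sseHandler(events)(rec, httptest.NewRequest("GET", "/events", nil))
	return rec.Body.String()
}

func main() {
	fmt.Print(render("cotton", "silk"))
}
```

In the browser the receiving end would be an `EventSource` and there is no handshake or origin negotiation to manage, which is most of the "less scaffolding".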

We’ve open-sourced the code for pubsocketd under a BSD license and we welcome suggestions, patches and (gentle) clue-bats:

https://github.com/cooperhewitt/go-pubsocketd

Enjoy!
